Security situation awareness algorithm of network information transmission based on big data

In this section, after describing the characteristics of the dataset used in the research, the steps of the proposed method for secure information transfer in computer networks using big data processing techniques are explained.

Database

The present study was designed and implemented using the CICIDS2017 dataset [28]. Unlike most common datasets for network security purposes, this dataset describes instances in the form of network traffic flows. Because it includes unprocessed traffic flows, it offers a highly realistic simulation of real-world network behavior. Designing and evaluating the proposed method on this dataset therefore gives a reasonable indication of its accuracy in real-world scenarios.

In addition to simulating real-world conditions, this dataset covers a diverse set of communication protocols and a wide range of recent security threats and attacks in computer networks, making it a suitable choice for the current research. The experimental network includes devices such as routers, switches, firewalls, and modems, as well as user and attacker machines running operating systems such as Mac OS X, Ubuntu, and Windows. Traffic flows are stored in PCAP format, and the activities of 25 users are covered based on protocols such as HTTP, HTTPS, FTP, SSH, and e-mail. The traffic flows were recorded over a 5-day period (from July 3, 2017, to July 7, 2017). The flows recorded on the first day include only normal (non-attack) traffic. The total number of normal instances in this dataset is 2,273,097. On the subsequent days, with the activation of a network of attackers consisting of 4 systems, various types of attack-related traffic flows were recorded. These attacks can be categorized as follows: Scan (158,930 instances, including Firewall Rule on and Firewall rules off), Bot (1,966 instances, including various Botnet ARES types), Heartbleed (11 instances, including Heartbleed Port 444), Infiltration (36 instances, including Dropbox download and Cool disk), DDoS (128,027 instances), DoS (252,661 instances, including slowloris, Slowhttptest, Hulk, GoldenEye, and LOIT), Brute-Force (13,835 instances, including FTP-Patator and SSH-Patator), and Web-Based (2,180 instances, including Web Attack – Brute Force, XSS, and SQL Injection). Further details of this dataset are given in [28].
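To make the class imbalance implied by these counts explicit, the following short Python sketch tabulates the share of each class. The counts are copied from the paragraph above; the dictionary keys are illustrative labels, not the label strings used in the dataset files.

```python
# Instance counts per class, as quoted in the text above.
counts = {
    "Normal": 2_273_097,
    "Scan": 158_930,
    "Bot": 1_966,
    "Heartbleed": 11,
    "Infiltration": 36,
    "DDoS": 128_027,
    "DoS": 252_661,
    "Brute-Force": 13_835,
    "Web-Based": 2_180,
}

total = sum(counts.values())
attack_total = total - counts["Normal"]

# Share of each class in the full dataset, largest class first.
shares = {
    name: n / total
    for name, n in sorted(counts.items(), key=lambda kv: -kv[1])
}

for name, share in shares.items():
    print(f"{name:<13} {counts[name]:>9,d}  {share:7.3%}")
print(f"{'All attacks':<13} {attack_total:>9,d}  {attack_total / total:7.3%}")
```

Normal traffic accounts for roughly four fifths of all instances, while classes such as Heartbleed and Infiltration contain only a few dozen flows, which is worth keeping in mind when interpreting per-class detection results.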

Proposed methodology

Given the substantial volume of data described in the previous section, building a robust detection model requires big data processing techniques, which are investigated in this study. This section explains how network traffic flows are analyzed for secure data transmission using machine learning and big data processing. The proposed method includes the following key steps:

1. Describing the features of traffic flows.
2. Feature selection based on clustering and mutual information (MI) analysis.
3. Distributed machine learning-based classification of traffic flows.

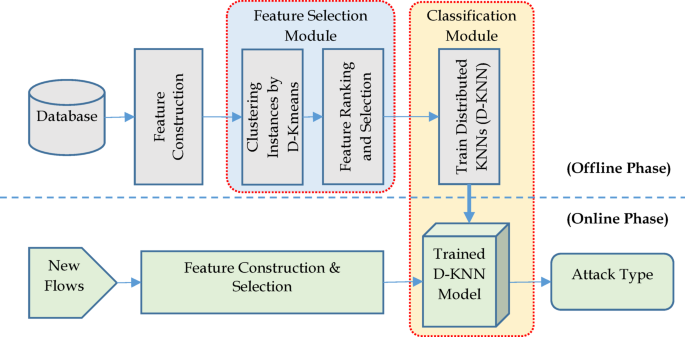

The computational stages of the proposed method are presented in Fig. 1.

Fig. 1. Stages of the proposed approach.

As depicted in Fig. 1, the proposed framework takes labeled traffic flow data (the training set) and unlabeled data (the test set) as input and comprises modules for feature construction, feature selection, and classification. In the first stage, each database instance is described by a feature vector composed of statistical and relational features extracted from its traffic flow. A clustering-based feature selection strategy is then employed to reduce the dimensionality of the data. The goal of this step is to eliminate features unrelated to security threats in network flows, thereby enhancing detection accuracy while reducing the hardware requirements for processing big data. To achieve this, the indicators extracted from traffic flows are first clustered using the distributed K-Means algorithm (D-KMeans), which organizes the data features into clusters.

Next, mutual information (MI) is used to rank the indicators by their relationship with the target variable. An iterative approach then determines the set of relevant indicators based on the MI values within each cluster. The outcome of this process is a subset of descriptive traffic flow indicators that provide the most useful information about security threats in the flow. In the third step of the proposed method, the selected indicators are used to classify traffic flows with a distributed K-nearest neighbors (D-KNN) model. This model can achieve high detection accuracy while meeting the computational requirements of big data processing. Once the D-KNN model has been trained on the training instances, it is used to detect security threats in new traffic flows.

As shown in Fig. 1, the proposed architecture runs in two phases: offline and online. The offline phase identifies the list of relevant indicators and constructs the distributed learning model from the training data. In the online (testing) phase, the relevant indicators are extracted from a set of unlabeled traffic flows, and the trained model analyzes these indicator sets to identify security threats in the flows.

To put our approach into perspective and clarify its operating limits, we specify the following threat model and assumptions.

Threat model

The system is designed to detect a network-based attacker who intends to breach the confidentiality, integrity, or availability of the network and the systems connected to it. We assume that the attacker generates malicious traffic flows that can be differentiated from benign traffic. The range of detectable threats corresponds to the contemporary attack vectors represented in the CICIDS2017 dataset. The adversary's actions are therefore assumed to fall into the following categories:

- Denial-of-Service (DoS/DDoS): Attacks that aim to overwhelm server or network resources (e.g., Hulk, GoldenEye, Slowloris, Slowhttptest).
- Brute-Force: Automated attempts to gain unauthorized access to services such as FTP and SSH.
- Web-Based Attacks: Attacks on web applications, such as Cross-Site Scripting (XSS) and SQL Injection.
- Scanning and Probing: Information gathering to locate open ports and exposed services.
- Infiltration and Botnets: Malware-based operations that involve establishing command-and-control connections and stealing data.

As a baseline, we assume that these malicious actions produce measurable anomalies in network flow characteristics (e.g., packet sizes, inter-arrival times, flow duration) that are detectable through our feature set.

Key assumptions

The performance and validity of the proposed algorithm are evaluated under the following assumptions:

- Labeled Data: In the offline training phase, we assume that an adequate and correctly labeled corpus of benign and malicious network traffic flows is available, because the performance of the supervised D-KNN model depends on the quality of these labels.
- Representativeness of Features: We assume that the set of engineered features is rich enough to capture the characteristics that distinguish malicious from normal network activity, and that the temporal, flow, and relational features together provide the information required for successful classification.
- Environmental Consistency: We assume that the network on which the model is deployed has traffic patterns (e.g., protocols, services) that are statistically close to those of the CICIDS2017 training environment. Major deviations, or concept drift, may impair detection accuracy.
- Known Threat Patterns: The model detects attacks whose patterns are similar to those in the training set. It is not specifically designed to detect entirely new (zero-day) attacks that are statistically unrelated to any of the known attack types.

Describing traffic flow features

Before feature extraction, the raw CICIDS2017 data underwent a preprocessing stage to ensure data quality and model stability. The first step was data cleaning, in which records containing missing (NaN) or infinite values were removed, as such values can interfere with the training of machine learning algorithms. After cleaning, all numerical features in the dataset were rescaled using Min–Max normalization. This technique maps each feature to the fixed range [0, 1], which is crucial for distance-based algorithms such as KNN because it prevents features with larger numeric ranges from having a disproportionate impact on the model. Each value X of a feature is normalized as follows:

$${X}_{normalized}=\frac{X-{X}_{min}}{{X}_{max}-{X}_{min}}$$

(1)

where \({X}_{min}\) and \({X}_{max}\) denote the minimum and maximum of that attribute over all records. The cleaned and normalized data was then used for feature engineering and selection.
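The cleaning and normalization steps can be illustrated with a minimal Python sketch. It is not the authors' implementation (which uses MATLAB); the function names `clean_rows` and `min_max_normalize`, the toy data, and the handling of constant columns are assumptions made for illustration.

```python
import math


def clean_rows(rows):
    """Drop records that contain NaN or infinite values."""
    return [r for r in rows if all(math.isfinite(v) for v in r)]


def min_max_normalize(rows):
    """Scale every column to [0, 1] following Eq. (1)."""
    cols = list(zip(*rows))
    mins = [min(c) for c in cols]
    maxs = [max(c) for c in cols]
    return [
        [
            # Assumption: a constant column (X_max == X_min) is mapped to 0.0,
            # because Eq. (1) is undefined in that case.
            (v - lo) / (hi - lo) if hi > lo else 0.0
            for v, lo, hi in zip(r, mins, maxs)
        ]
        for r in rows
    ]


# Toy records with three numeric features each (values are made up).
flows = [
    [10.0, 200.0, 5.0],
    [20.0, float("nan"), 5.0],   # dropped: missing value
    [30.0, 400.0, 5.0],
    [float("inf"), 300.0, 5.0],  # dropped: infinite value
    [50.0, 300.0, 5.0],
]

normalized = min_max_normalize(clean_rows(flows))
print(normalized)
```

Note that the minimum and maximum are computed after cleaning, so an infinite value cannot distort the scaling range.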

The next step in the proposed approach is to describe each traffic flow using a fixed-length feature vector. A labeled dataset of traffic flows serves as the training data. To define the feature vector for each instance, statistical features are extracted in both directions of the flow, i.e., forward (client to server) and backward (server to client). This two-way analysis is important because network communications, particularly malicious ones, tend to be asymmetric. For example, a DoS attack may generate a large number of forward requests and little backward traffic. By computing the statistical properties of each direction separately, the model obtains a more detailed view of the flow and can better distinguish malicious from benign flows. The proposed method uses two groups of statistical features and a set of relational features to describe a traffic flow:

- Temporal features: These features describe the statistical properties of a traffic flow at specific time points within the flow. They are extracted at randomly chosen time points along the connection: the time point for the k-th feature is drawn from an exponential distribution with a mean of \({2}^{\text{k}}\) seconds. For example, if 10 time points are used, they are drawn from exponential distributions with means ranging from \({2}^{1}\) to \({2}^{10}\) seconds. Statistical features are then extracted at these time points. We refer to this group as “temporal features.”
- Flow features: These features are computed from the overall properties of a flow and can also be useful in detecting network traffic types. Notably, they do not require the flow to be completed; they represent statistics up to the current time (unlike temporal features, which depend on specific time points). We refer to this group as “flow features.”
- Relational features of the flow: This set of features describes technical and communication specifications of the flow, such as source and destination port numbers, control flag counts, and data volume-related features. These features are extracted using the CICFlowMeter tool [29]. In total, 80 features are employed to describe traffic flows.

The set of features used for constructing feature vectors in the proposed method is detailed in Table 2.
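The sampling of time points for the temporal features can be sketched as follows. This is an illustrative Python sketch under the reading that the k-th time point is drawn from an exponential distribution with mean 2^k seconds; the function name `sample_time_points` and the seed are hypothetical.

```python
import random


def sample_time_points(num_points, seed=None):
    """Draw one time point (in seconds) per k = 1..num_points,
    where the k-th point is exponentially distributed with mean 2**k."""
    rng = random.Random(seed)
    # random.expovariate expects the rate lambda = 1 / mean.
    return [rng.expovariate(1.0 / 2 ** k) for k in range(1, num_points + 1)]


# Ten time points with means 2^1 ... 2^10 seconds.
points = sample_time_points(10, seed=42)
for k, t in enumerate(points, start=1):
    print(f"k={k:2d}  mean={2 ** k:5d} s  sampled={t:9.2f} s")
```

Because the means grow geometrically, early time points probe the start of a connection densely, while later ones cover long-lived flows.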

Feature selection based on clustering and MI analysis

The second step in the proposed approach involves selecting an optimal subset of indicators relevant to security threats in network traffic flows. This is achieved by combining clustering techniques and MI analysis. The proposed feature selection strategy includes the following stages:

1. Clustering of Indicators: In this stage, the indicators are grouped into clusters based on their values across the training instances. The clustering algorithm used is D-KMeans, which meets the computational requirements of large-scale data.
2. Ranking Clustered Indicators: After clustering, the importance of each clustered indicator is evaluated using MI to assess its relationship with security threat types.
3. Final Indicator Selection: Finally, a forward sequential feature selection strategy is employed to choose indicators relevant to security threats. This strategy explores the problem space while taking the structure of the clustered indicators into account, aiming to maximize prediction accuracy.

The proposed feature selection process begins with clustering the indicators. Given the large volume of training data, the chosen clustering algorithm must be capable of handling big data. For N data points, the standard K-Means algorithm with T iterations has a computational complexity of \(O(NKT)\) on a single machine, which is not suitable for big data processing applications. Therefore, in the proposed method, a D-KMeans model [30] is used for indicator clustering. To make our results replicable, we specify the parameters of the D-KMeans algorithm. The number of clusters was empirically set to K = 15, because this value grouped functionally related features reasonably well and produced clusters that were neither too granular nor too broad. The algorithm stops either when the maximum number of iterations, T = 100, is reached or when the difference between the cluster centers of two successive iterations falls below a threshold of ϵ = 0.001. This clustering algorithm leverages the MapReduce model for data categorization. In this computational model, the data is divided into n subsets, and the process of identifying cluster centers within each subset is performed independently. The centers identified for each data subset are then merged, and after updating these centers, the final classification is determined. The steps of indicator clustering using the D-KMeans algorithm are as follows [31]:

1. Partitioning the Indicators: The set of indicators is divided into n subsets.
2. Random Initialization of K Centers: For each data subset, an initial set of K centers is randomly generated.
3. Computing Distances: In each data subset, the Euclidean distance between each instance and each of the K existing centers is calculated.
4. Data Reassignment: Data points are reassigned to the nearest cluster until all data points have been processed across all subsets.
5. Collecting Data Records: Data records are collected and processed in the form of data points and K clusters.
6. Calculating New Centers: The newly identified centers for each data subset are merged, and after adjusting these centers, the final classification is determined.
7. Iterative Refinement: The process is repeated from step 3 until the algorithm reaches the iteration limit T or the change in the centers between two consecutive iterations falls below the threshold ϵ.
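The steps above can be sketched in plain Python as a single-process emulation of the map and reduce stages. This is a simplified illustration, not the authors' Spark-based implementation: it assumes one shared set of initial centers (drawn from the data) rather than per-subset initialization, and all function names are hypothetical.

```python
import math
import random


def partition(points, n):
    """Step 1: split the points into n roughly equal subsets."""
    return [points[i::n] for i in range(n)]


def map_partial_sums(subset, centers):
    """Steps 3-4 on one subset: assign each point to its nearest center
    (Euclidean distance) and return per-cluster sums and counts."""
    dim = len(centers[0])
    sums = [[0.0] * dim for _ in centers]
    counts = [0] * len(centers)
    for p in subset:
        j = min(range(len(centers)), key=lambda c: math.dist(p, centers[c]))
        counts[j] += 1
        for d in range(dim):
            sums[j][d] += p[d]
    return sums, counts


def reduce_centers(partials, centers):
    """Steps 5-6: merge the partial results and recompute the centers."""
    new_centers = []
    for j, old in enumerate(centers):
        total = sum(cnt[j] for _, cnt in partials)
        if total == 0:
            new_centers.append(list(old))  # keep the center of an empty cluster
            continue
        new_centers.append(
            [sum(s[j][d] for s, _ in partials) / total for d in range(len(old))]
        )
    return new_centers


def d_kmeans(points, k, n_subsets=4, max_iter=100, eps=1e-3, seed=0):
    rng = random.Random(seed)
    centers = [list(p) for p in rng.sample(points, k)]  # step 2 (simplified)
    subsets = partition(points, n_subsets)
    for _ in range(max_iter):
        partials = [map_partial_sums(s, centers) for s in subsets]  # map
        new_centers = reduce_centers(partials, centers)             # reduce
        shift = max(math.dist(a, b) for a, b in zip(centers, new_centers))
        centers = new_centers
        if shift < eps:  # step 7: centers moved less than the threshold
            break
    labels = [min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points]
    return centers, labels


# Toy example: two well-separated groups of 2-D points.
data = [[0, 0], [0, 1], [1, 0], [1, 1], [10, 10], [10, 11], [11, 10], [11, 11]]
centers, labels = d_kmeans(data, k=2, n_subsets=3)
print(labels)
```

In the paper's setting, each point would be one of the 80 indicators (represented by its values over the training instances), with K = 15, T = 100, and ϵ = 0.001. Only the per-cluster sums and counts cross partition boundaries, which is what makes the map stage easy to distribute.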

By executing the above steps, the indicators are placed into K clusters. Indicators within the same cluster are more similar to each other than to indicators in other clusters. Consequently, combining indicators from the same cluster may introduce redundancy and provide repetitive information to the classification model. To address this issue, the proposed feature selection strategy employs MI ranking of indicators. The MI between each indicator X and the target variable Y is computed using Eq. (2) [32]:

$$MI\left(X,Y\right)=\sum_{x\in X}\sum_{y\in Y}p\left(x,y\right)\times \log\left[\frac{p\left(x,y\right)}{p\left(x\right)\times p\left(y\right)}\right]$$

(2)

where \(p\left(x\right)=\text{Pr}\left\{X=x\right\},x\in X\) is the probability distribution of X, \(p\left(y\right)\) is defined analogously for Y, and \(p\left(x,y\right)\) is their joint distribution. According to this equation, an indicator with a higher MI value carries more useful information about variations in the target variable. Consequently, by selecting indicators with higher MI values, we can identify a relevant set of indicators. However, it is also essential to minimize redundancy: the selected indicators should provide useful information while minimizing the amount of repetitive information passed to the classifier. In the proposed method, the clustering results are used to filter out redundant indicators. As mentioned earlier, indicators within the same cluster are more similar to each other than to indicators in other clusters, so selecting several indicators from one cluster increases the probability of redundancy.
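As an illustration of Eq. (2), the following Python sketch estimates MI from empirical frequencies of two discrete sequences. It assumes the natural logarithm (the equation does not state a base) and assumes that continuous indicators have already been discretized, which the text does not detail; the function name is hypothetical.

```python
import math
from collections import Counter


def mutual_information(xs, ys):
    """Empirical MI(X, Y) in nats for two equally long sequences
    of discrete values, following Eq. (2)."""
    n = len(xs)
    px = Counter(xs)            # marginal counts of X
    py = Counter(ys)            # marginal counts of Y
    pxy = Counter(zip(xs, ys))  # joint counts of (X, Y)
    mi = 0.0
    for (x, y), c in pxy.items():
        p_joint = c / n
        mi += p_joint * math.log(p_joint / ((px[x] / n) * (py[y] / n)))
    return mi


labels = ["benign", "benign", "attack", "attack"]
informative = [0, 0, 1, 1]    # fully determines the label
uninformative = [0, 1, 0, 1]  # independent of the label

print(mutual_information(informative, labels))
print(mutual_information(uninformative, labels))
```

Pairs with zero joint count never appear in the loop, which matches the usual convention that such terms contribute nothing to the sum.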

Finally, we combine the MI ranking with the cluster structure in an iterative forward sequential selection method to determine the optimal subset of features. The method is adaptive: rather than relying on a fixed MI threshold, it builds the feature set according to classification performance. It works as follows:

1. Ranking in Clusters: The features in each of the K = 15 clusters are ranked in descending order of their MI with the target variable (the type of attack).
2. Iterative Selection and Evaluation: The selection starts with an empty feature set. At every iteration, the algorithm considers the highest-ranked feature of each cluster that has not yet been chosen. To determine the utility of each of these candidate features, a D-KNN classification model is trained and its performance is measured using fivefold cross-validation.
3. Decision and Stopping Criterion: The candidate feature that yields the greatest reduction in the mean cross-validation error is permanently added to the selected feature set. This is repeated, cycling through the clusters so that a diverse and non-redundant set of features is selected. The selection procedure ends when adding a further feature from any cluster no longer reduces the cross-validation error of the model.

The resulting set of features is not only highly informative (high MI) but also as non-redundant as possible (because features are drawn from different clusters), which benefits both the predictive accuracy and the computational cost of the model.
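The selection loop described above can be sketched as follows. This Python sketch is illustrative only: `cv_error` stands in for training a D-KNN model and measuring its mean fivefold cross-validation error, and the toy error function and feature names are hypothetical.

```python
def cluster_forward_selection(ranked_clusters, cv_error):
    """Greedy forward selection over MI-ranked clusters.

    ranked_clusters: list of lists; each inner list holds the features of one
                     cluster, sorted by MI with the target in descending order.
    cv_error:        callable mapping a list of features to a mean
                     cross-validation error (assumed to wrap the classifier).
    """
    remaining = [list(c) for c in ranked_clusters]
    selected = []
    best_error = float("inf")
    while True:
        # One candidate per cluster: its top-ranked feature not yet chosen.
        candidates = [(c[0], i) for i, c in enumerate(remaining) if c]
        if not candidates:
            break
        scored = [(cv_error(selected + [f]), f, i) for f, i in candidates]
        err, feat, idx = min(scored, key=lambda t: t[0])
        if err >= best_error:
            break  # no candidate reduces the error any further
        selected.append(feat)
        remaining[idx].pop(0)
        best_error = err
    return selected, best_error


# Toy stand-in for the cross-validation error: features "a" and "c" help,
# and every added feature carries a small complexity penalty.
gain = {"a": 0.2, "c": 0.1}


def toy_cv_error(features):
    return 0.5 - sum(gain.get(f, 0.0) for f in features) + 0.01 * len(features)


chosen, error = cluster_forward_selection([["a", "b"], ["c", "d"]], toy_cv_error)
print(chosen, round(error, 2))
```

In this toy run, the loop picks one feature from each cluster and then stops, because adding either remaining feature would raise the error.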

Distributed traffic flow classification based on machine learning

Having found a good subset of features, the last stage of our framework is to classify traffic flows. For this task, we chose the KNN algorithm. Although other models, such as Random Forest (RF) or SVM, can be powerful, KNN was selected for two main reasons. First, it is non-parametric: it does not assume any underlying distribution of the data, which is often complex and unpredictable in network traffic. Second, KNN is known to be simple and effective at capturing complex, non-linear patterns in the feature space, which can often result in high classification accuracy.

We acknowledge that the main disadvantage of standard KNN is its computational inefficiency on large datasets. Our methodology mitigates this challenge in two ways: (1) the feature selection step described above considerably reduces the dimensionality of the data, and (2) we apply KNN in a distributed manner (D-KNN). We posit that for critical security applications, the potential accuracy gains of KNN, once scaled through this distributed architecture, represent an attractive trade-off.

To make our framework scalable to big data, we implemented it in MATLAB R2020a. For the distributed algorithms (D-KMeans and D-KNN), MATLAB was configured to communicate directly with an Apache Spark-enabled cluster. This integration allowed us to use Spark's distributed computing engine, namely its Resilient Distributed Datasets (RDDs) to partition our data and its MLlib library to perform the underlying machine learning tasks. The cluster consisted of 1 master node and 4 worker nodes with 8 cores and 16 GB of RAM each, and the data was divided into 100 partitions to make full use of parallel processing. As a result, the computationally intensive distance calculations, coordinated from MATLAB, run in parallel on the cluster, and the total processing time is substantially reduced.

The D-KNN model is built on the selected features of the training instances and is applied during the prediction step to identify security threats in network traffic flows. The algorithm is based on the MapReduce model proposed in [33], which includes two main steps:

1. Map Phase: The training data is distributed across the worker nodes. Each new test instance to be classified is broadcast to all nodes. Each node calculates the distances between the test instance and its local subset of training instances and determines its local set of K-nearest neighbors.
2. Reduce Phase: The master node gathers the lists of local K-nearest neighbors from all worker nodes and merges them to identify the global K-nearest neighbors among all candidates. Finally, the test instance is assigned its class label by a majority vote of these global neighbors.
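The two phases can be emulated on a single machine with the following Python sketch. It is a simplified illustration rather than the Spark-based implementation: the partitions are plain lists, the function names are hypothetical, and K = 3 is an assumed value (the text does not state the K used for D-KNN).

```python
import heapq
import math
from collections import Counter


def map_local_neighbors(partition, query, k):
    """Map phase on one worker: the k nearest local training instances,
    returned as (distance, label) pairs."""
    scored = ((math.dist(x, query), label) for x, label in partition)
    return heapq.nsmallest(k, scored, key=lambda t: t[0])


def reduce_and_vote(local_lists, k):
    """Reduce phase on the master: merge the local lists, keep the global
    k nearest neighbors, and return the majority label."""
    merged = [pair for lst in local_lists for pair in lst]
    top_k = heapq.nsmallest(k, merged, key=lambda t: t[0])
    votes = Counter(label for _, label in top_k)
    return votes.most_common(1)[0][0]


def d_knn_predict(partitions, query, k=3):
    local_lists = [map_local_neighbors(p, query, k) for p in partitions]
    return reduce_and_vote(local_lists, k)


# Toy training data spread over three "workers" (features already normalized).
partitions = [
    [((0.0, 0.0), "benign"), ((5.0, 5.0), "attack")],
    [((0.0, 1.0), "benign"), ((5.0, 6.0), "attack")],
    [((1.0, 0.0), "benign"), ((6.0, 5.0), "attack")],
]

print(d_knn_predict(partitions, (0.2, 0.1)))
print(d_knn_predict(partitions, (4.8, 5.1)))
```

Because every global K-nearest neighbor must also be among the K nearest on its own worker, merging the local lists yields the same result as a search over the full training set, while each worker only transmits K candidates.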

This distributed solution not only speeds up the computations but also makes the system more robust, since the failure of a single worker node does not stop the whole classification process. Details of this classification model are given in [33].
